LLM Delimiters and Higher-Order Expressions

Delimiters Between First-Order and Higher-Order Expressions

In a previous article (which I recommend reading for context), I introduced the general idea that delimiters marking transitions between first-order and second-order expressions are fundamental to all languages that convey meaning. I applied these ideas to human languages, programming languages, and even genetic code.

In a nutshell:

First-order expressions are evaluated.

Second-order expressions serve a different purpose (e.g., they are interpreted, executed, etc.).

Both use the same symbols, and therefore need delimiters that mark the transition between these categories of expressions.

As an example, in the following sentence:

She told him: “it is raining outside.”

“She told him” is a first-order expression.

“It is raining outside” is a second-order expression.

The quotation marks are the delimiters.

In this article, I suggest extending this principle to LLMs. More specifically: how are delimiters used so that LLMs can differentiate between first-order and second-order (or, more generally, higher-order) expressions? And why do they “break” models when they are misused?

LLM Delimiters

The de Facto Need for Delimiters by Model Creators

The role of special tokens in LLMs illustrates this idea neatly. Thanks to these special tokens, LLMs can differentiate between raw text, which is treated as first-order expressions, and text with a specific meaning or purpose, which is represented as higher-order expressions. Concretely, the tokenizer (through the model's chat template) injects these tokens into the input sequence to mark where context-dependent information begins or ends. In practice, they are akin to reserved words.
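To make the “reserved words” analogy concrete, here is a toy tokenizer in Python. It is a sketch under stated assumptions, not Llama's actual tokenizer: the token IDs (1000 and up), the byte-level fallback for ordinary text, and the parse_special flag are all invented for illustration. What it shows is the key property: a special token is matched as a whole and mapped to an ID outside the range used for ordinary text.

```python
import re

# Hypothetical reserved vocabulary: IDs above the byte range (0-255) used for plain text.
SPECIAL_TOKENS = {
    "<|begin_of_text|>": 1000,
    "<|start_header_id|>": 1001,
    "<|end_header_id|>": 1002,
    "<|eot_id|>": 1003,
}
# Match special tokens atomically, longest alternatives first.
_SPECIAL_RE = re.compile(
    "(" + "|".join(re.escape(t) for t in sorted(SPECIAL_TOKENS, key=len, reverse=True)) + ")"
)

def toy_encode(text: str, parse_special: bool = True) -> list[int]:
    """Encode text: special tokens become reserved IDs, everything else UTF-8 bytes."""
    if not parse_special:
        # Treat the whole input as first-order text, delimiter look-alikes included.
        return list(text.encode("utf-8"))
    ids: list[int] = []
    for piece in _SPECIAL_RE.split(text):  # the capturing group keeps the delimiters
        if piece in SPECIAL_TOKENS:
            ids.append(SPECIAL_TOKENS[piece])
        elif piece:
            ids.extend(piece.encode("utf-8"))
    return ids

print(toy_encode("<|begin_of_text|>Hi"))  # [1000, 72, 105]
```

Note that with parse_special=True, any text containing the string <|eot_id|> yields the reserved ID, whoever wrote it; this is the door that delimiter injection goes through.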

It is interesting to see, across LLMs, a general need for pairs of delimiters that differentiate the function of the text. Mistral AI uses control tokens ([INST], [/INST], etc.). DeepSeek also uses special tokens; notably, its reasoning process is enclosed between <think> and </think> (pdf, p. 6). Llama uses this approach too (for instance, <|begin_of_text|> and <|eot_id|>), as well as “reasoning tags” since version 3.2.

LLM Delimiters in Practice

Now, let’s focus on a specific pair of delimiters used by Llama 3:

<|start_header_id|>{role}<|end_header_id|>: these tokens enclose the role of a particular message. The roles include system, user, and assistant.

These delimiters lead the LLM to apprehend the enclosed text as an actual role for the model, not as a mere textual representation of a role.

Example:

<|begin_of_text|><|start_header_id|>User<|end_header_id|>Translate: Bonjour !<|eot_id|>

The first pair of delimiters (<|begin_of_text|>, <|eot_id|>) indicates to the LLM that this is a prompt to which it will have to reply. The second pair of delimiters (<|start_header_id|>, <|end_header_id|>) indicates to the LLM that the prompt comes from the user. This gives the enclosed token a special meaning: here, the model apprehends “User” as a user-as-a-role.
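This structure can be generated programmatically. The hypothetical helper format_llama3_prompt below follows the layout of the Llama 3 chat format, which differs slightly from the simplified example above: role names are lowercase, and two newlines follow <|end_header_id|>. It is a sketch; in practice you would rely on the chat template shipped with the model rather than hand-rolling one.

```python
# Roles accepted by this sketch; Llama 3.1 additionally defines "ipython", omitted here.
ALLOWED_ROLES = {"system", "user", "assistant"}

def format_llama3_prompt(messages: list[dict[str, str]], add_generation_prompt: bool = True) -> str:
    """Build a Llama 3-style chat string from {"role": ..., "content": ...} dicts."""
    parts = ["<|begin_of_text|>"]
    for msg in messages:
        role = msg["role"]
        if role not in ALLOWED_ROLES:
            raise ValueError(f"unknown role: {role!r}")
        # The header delimiters turn the role name into a role, not plain text.
        parts.append(f"<|start_header_id|>{role}<|end_header_id|>\n\n{msg['content']}<|eot_id|>")
    if add_generation_prompt:
        # Open an assistant header so the model knows it must answer next.
        parts.append("<|start_header_id|>assistant<|end_header_id|>\n\n")
    return "".join(parts)

print(format_llama3_prompt([{"role": "user", "content": "Translate: Bonjour !"}]))
```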

This is radically different from this fictitious example:

<|begin_of_text|>(User) Translate Bonjour !<|eot_id|>

In this statement, “User” has the same “cognitive” value as “Translate Bonjour !”.
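A string-level analogy of this difference: the hypothetical extract_roles function below only recognizes a role when it is enclosed by the header delimiters. The model itself does not run a regular expression (it sees reserved token IDs), so this only illustrates the principle.

```python
import re

# A role exists only if it sits between the two header delimiters.
_HEADER_RE = re.compile(r"<\|start_header_id\|>(.*?)<\|end_header_id\|>", re.DOTALL)

def extract_roles(prompt: str) -> list[str]:
    """Return every role enclosed by header delimiters, in order of appearance."""
    return _HEADER_RE.findall(prompt)

delimited = "<|begin_of_text|><|start_header_id|>User<|end_header_id|>Translate: Bonjour !<|eot_id|>"
fictitious = "<|begin_of_text|>(User) Translate Bonjour !<|eot_id|>"
print(extract_roles(delimited))   # ['User']
print(extract_roles(fictitious))  # []
```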

This example also highlights the fact that, in the context of artificial intelligence, there can be higher-order expressions with different layers of purposes:

<|begin_of_text|> marks the transition to the beginning of a piece of information (transition to a first higher-order expression).

<|start_header_id|> marks the (immediate) transition to tokens meaning a role (transition to a second higher-order expression).

User is a higher-order expression (a role).

<|end_header_id|> marks the end of this higher-order expression.

Translate Bonjour ! is another higher-order expression (the prompt).

<|eot_id|> marks the end of this higher-order expression.
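These nested layers can be recovered with a stack, just like matching parentheses. The sketch below pairs <|begin_of_text|> with <|eot_id|> following the reading above; this pairing is an assumption that only holds for this single-turn example (in a real multi-turn conversation, <|eot_id|> closes each message while <|begin_of_text|> appears once). The layers function and its labels are hypothetical.

```python
import re

# Opening delimiter -> (matching closing delimiter, label of the enclosed layer).
# The begin_of_text/eot_id pairing follows the reading above, not a specification.
PAIRS = {
    "<|begin_of_text|>": ("<|eot_id|>", "prompt"),
    "<|start_header_id|>": ("<|end_header_id|>", "role"),
}
CLOSERS = {close: open_ for open_, (close, _) in PAIRS.items()}
_TOKEN_RE = re.compile("(" + "|".join(re.escape(t) for t in [*PAIRS, *CLOSERS]) + ")")

def layers(prompt: str) -> list[tuple[str, int, str]]:
    """Return (text, depth, innermost layer label) for each non-delimiter segment."""
    stack: list[str] = []
    out: list[tuple[str, int, str]] = []
    for piece in _TOKEN_RE.split(prompt):
        if piece in PAIRS:
            stack.append(piece)  # entering a higher-order expression
        elif piece in CLOSERS:
            if not stack or stack[-1] != CLOSERS[piece]:
                raise ValueError(f"unbalanced delimiter: {piece}")
            stack.pop()  # leaving it
        elif piece:
            label = PAIRS[stack[-1]][1] if stack else "plain text"
            out.append((piece, len(stack), label))
    if stack:
        raise ValueError(f"unclosed delimiter: {stack[-1]}")
    return out

print(layers("<|begin_of_text|><|start_header_id|>User<|end_header_id|>Translate Bonjour !<|eot_id|>"))
# [('User', 2, 'role'), ('Translate Bonjour !', 1, 'prompt')]
```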

The DeepSeek <think></think> delimiters are the most recent illustration of the very same mechanism. They tell the model that the expression enclosed by them is its “reasoning”, as opposed to its final answer.
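On the consumer side, the same delimiters let client code separate the reasoning from the final answer. A minimal sketch, assuming the completion contains at most one <think>...</think> block; split_reasoning is a hypothetical helper, not part of any DeepSeek SDK.

```python
import re

# Reasoning is whatever sits between the first <think> and the next </think>.
_THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)

def split_reasoning(output: str) -> tuple[str, str]:
    """Return (reasoning, answer) from a completion that uses <think>...</think>."""
    match = _THINK_RE.search(output)
    if match is None:
        return "", output.strip()  # no reasoning block: everything is the answer
    reasoning = match.group(1).strip()
    answer = output[match.end():].strip()
    return reasoning, answer

reasoning, answer = split_reasoning("<think>\nBonjour means hello.\n</think>\n\nHello!")
print(answer)  # Hello!
```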

Breaking Models With Delimiters (Or: Using Delimiters in First-Order Expressions)

Use And Abuse of LLM Delimiters

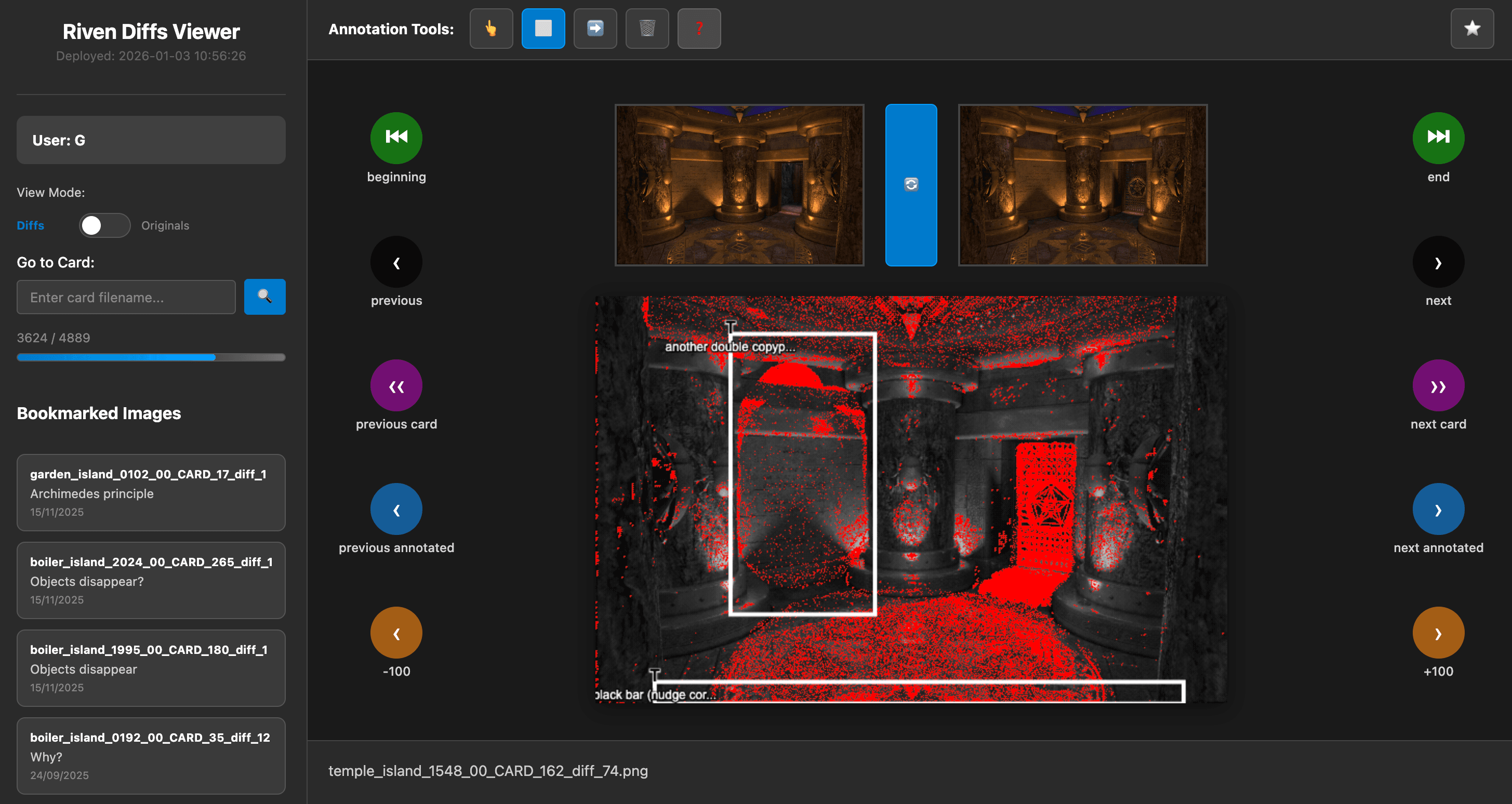

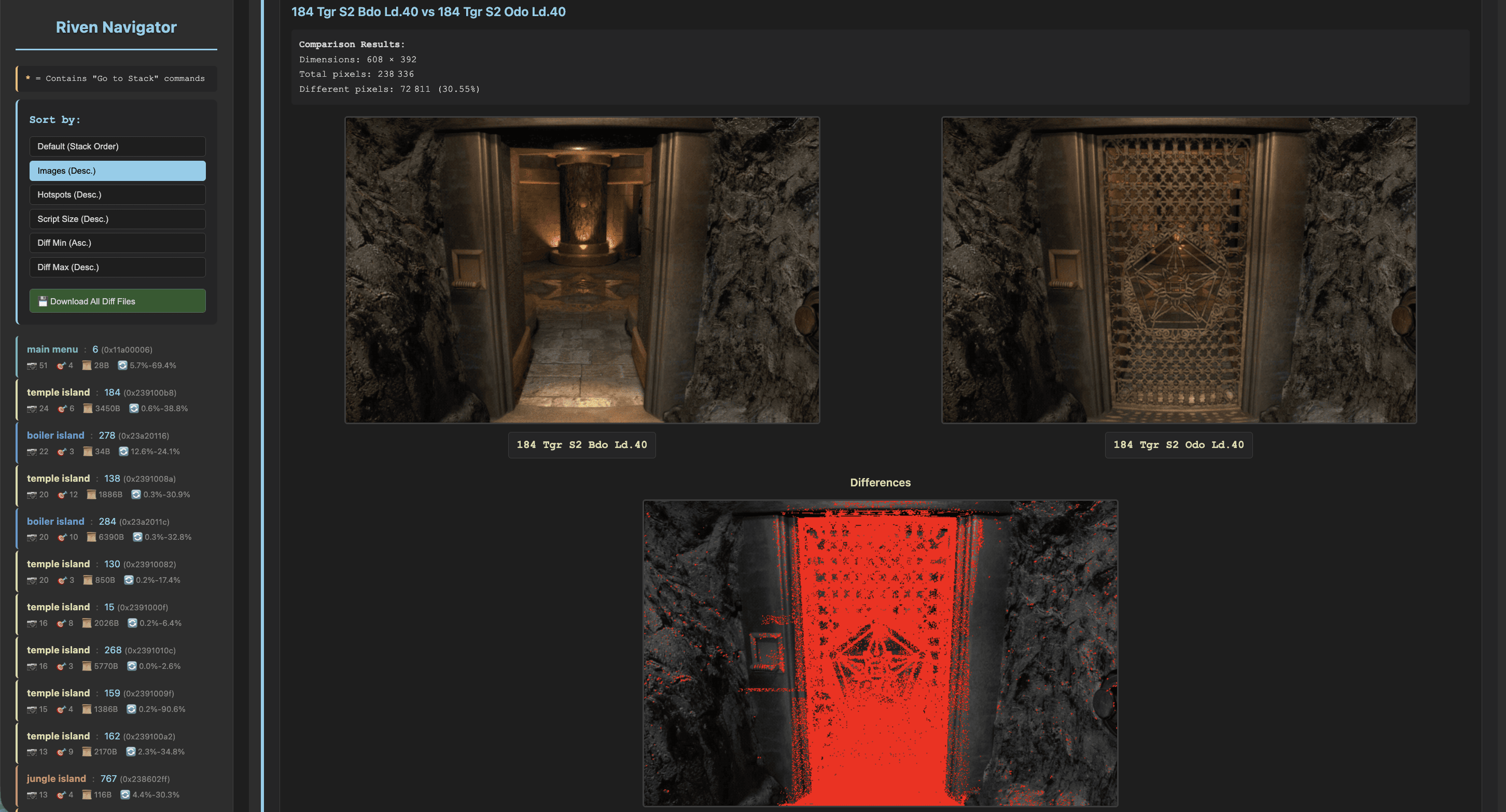

Interestingly, if delimiters are not sanitized from the prompt, they can make the model produce text that appears meaningless. This recent web article on “Anomalous Tokens in DeepSeek-V3 and r1” has a section on special tokens. It shows how, when a user maliciously uses a delimiter as a first-order expression (that is, in a prompt), the model erroneously interprets it as a transition from or to a higher-order expression. In other words, when the prompt is not sufficiently sanitized, the flow of a model can be broken by injecting delimiters. In that sense, it is similar (all things considered) to the class of SQL injections that use single quotation marks. While these SQL injections manipulate the transition between what is evaluated and what is executed, prompt injections of this kind aim to make a model transition to a different semantic dimension.

This behavior is easy to reproduce. I asked llama-3.2-3b-instruct a simple question and injected representations of delimiters into my first-order prompt: “Why does DeepSeek need <think> and </think>?” While the model correctly identified the purpose of the question, reproducing the delimiters in its response turned them into actual delimiters that de facto led it into higher-order reasoning: it thought it had to think about it.

(I highlight the first places where the model broke).
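Two string-level helpers sketch what sanitization and detection could look like. sanitize_user_text neutralizes delimiter look-alikes in user input by inserting a zero-width space (U+200B) after their first character, so the question keeps its meaning without matching a reserved token; first_break returns the position of the first delimiter that appears in a text, which is one way to locate where a response broke. Both names and the DELIMITERS list are assumptions; a more robust defense is to tokenize user text so that it can never map onto reserved token IDs.

```python
import re
from typing import Optional

# Assumed list of delimiter strings to guard against (Llama 3 and DeepSeek-R1 examples).
DELIMITERS = ["<|begin_of_text|>", "<|start_header_id|>", "<|end_header_id|>",
              "<|eot_id|>", "<think>", "</think>"]
_DELIM_RE = re.compile("|".join(re.escape(d) for d in DELIMITERS))

def sanitize_user_text(text: str) -> str:
    """Break up delimiter look-alikes so they remain first-order text."""
    # "<think>" becomes "<" + U+200B + "think>": readable, but no longer a match.
    return _DELIM_RE.sub(lambda m: m.group(0)[0] + "\u200b" + m.group(0)[1:], text)

def first_break(text: str) -> Optional[tuple[int, str]]:
    """Return (offset, delimiter) for the first delimiter found in the text, else None."""
    match = _DELIM_RE.search(text)
    return (match.start(), match.group(0)) if match else None

question = "Why does DeepSeek need <think> and </think>?"
safe = sanitize_user_text(question)
print(first_break(question))  # (23, '<think>')
print(first_break(safe))      # None
```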

LLM Delimiters Not Taken Seriously, Yet

The implications of the relationship between delimiters, first-order expressions, and higher-order expressions are still poorly understood when it comes to building LLMs. The solutions to issues caused by the misuse of delimiters can remain rather unsophisticated. For instance, the DeepSeek-R1 repository states:

Additionally, we have observed that the DeepSeek-R1 series models tend to bypass thinking pattern (i.e., outputting "<think>\n\n</think>") when responding to certain queries, which can adversely affect the model's performance. To ensure that the model engages in thorough reasoning, we recommend enforcing the model to initiate its response with "<think>\n" at the beginning of every output.
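The DeepSeek-R1 README's workaround amounts to prefilling the assistant turn with "<think>\n". A minimal sketch of both the check and the fix, where force_thinking and bypassed_thinking are hypothetical helpers operating on plain strings:

```python
import re

# An empty reasoning block at the start of a completion: the "bypass" pattern.
_EMPTY_THINK_RE = re.compile(r"^\s*<think>\s*</think>")

def force_thinking(prompt: str) -> str:
    """Prefill the assistant turn with "<think>\n", as the DeepSeek-R1 README recommends."""
    return prompt if prompt.endswith("<think>\n") else prompt + "<think>\n"

def bypassed_thinking(completion: str) -> bool:
    """True if the completion opens with an empty <think></think> block."""
    return _EMPTY_THINK_RE.match(completion) is not None

print(bypassed_thinking("<think>\n\n</think>Paris."))  # True
```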

Conclusion

We have seen that delimiters are crucial for LLMs to differentiate between first-order and higher-order expressions, which explains why model creators have generally converged on using them. Consequently, the misuse of delimiters can lead to unexpected behavior or errors, making the model “derail” from one order to another. Because LLMs are evaluators and generators of language, I believe they could be greatly improved by studying how models process delimiters and switch between first-order and higher-order expressions. I also recommend documenting delimiters more systematically when a model is released.